Hyeyung Park

Hyeyung Park is a doctoral student in Distance Education at Athabasca University. Her research focuses on artificial intelligence in education (AIED). She is interested in human-in-the-loop automation in educational systems, with a focus on fostering critical thinking in the AI era. She has lived in Edmonton, Alberta, since 2008. In her free time, she enjoys science-fiction films such as Dune and Star Wars.

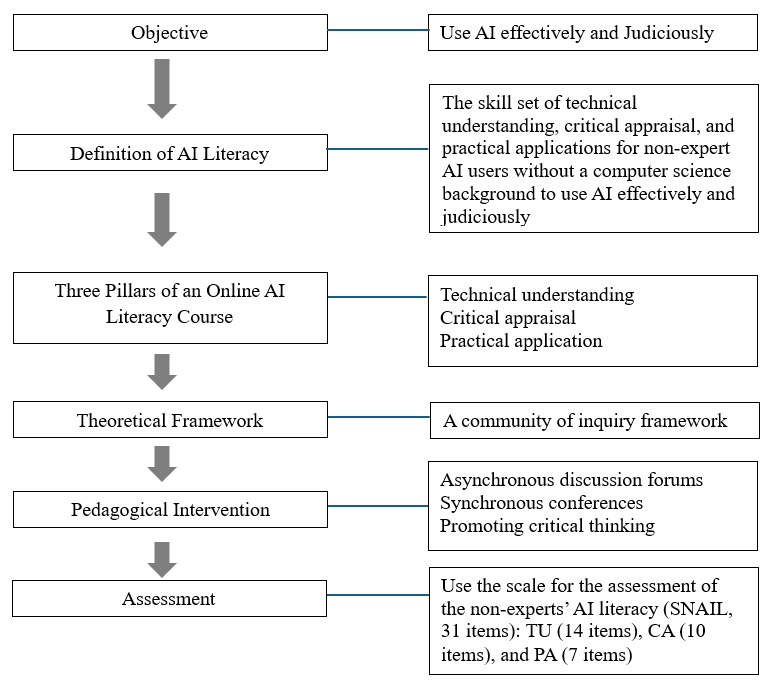

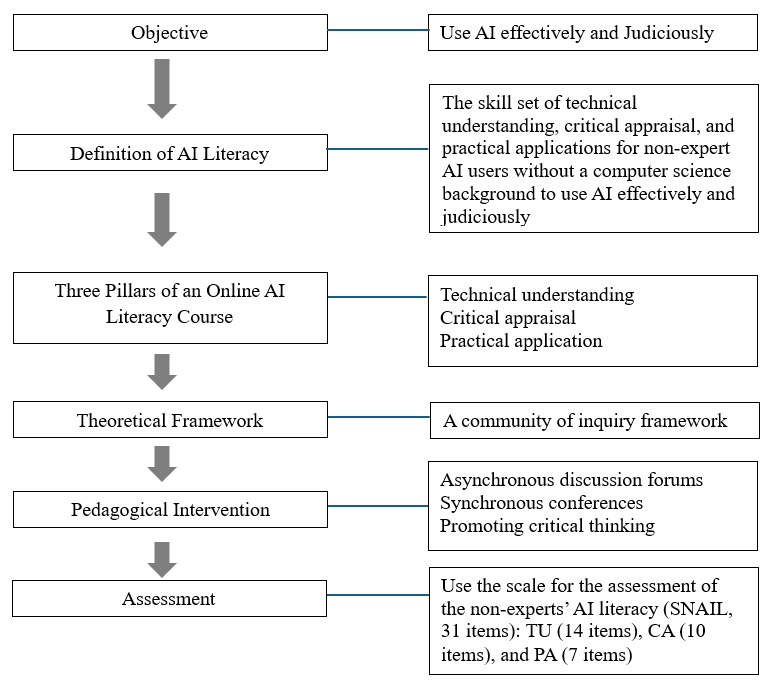

Artificial intelligence (AI) is increasingly embedded in everyday life, reshaping how people think, live, and work. AI outputs can include hallucinations because they are generated from likelihoods and probabilities derived from the training data. AI outputs also reinforce existing biases because AI systems are trained on content that already contains them. AI literacy and critical thinking are therefore crucial for debunking AI hype and enabling people to leverage AI’s potential effectively and ethically. This study is theoretically rooted in constructivism, which holds that individuals actively construct and confirm knowledge. This paper proposes three pillars for an online AI literacy course: technical understanding, critical appraisal, and practical application, which correspond to the three factors of the scale for the assessment of non-experts’ AI literacy (SNAIL). This paper also suggests an instrument to measure learning outcomes for non-expert AI users, serving as both a theoretical framework for AI literacy course design and an educational intervention. A community of inquiry (CoI) framework provides a theoretical foundation for an online AI literacy course and supports critical thinking. Integrating the three pillars of the course into the SNAIL offers methodological and pedagogical benefits by enabling educators and instructional designers to ensure alignment between what is taught and what is evaluated.

Keywords: AI literacy, SNAIL, constructivism, CoI framework, critical thinking, hallucinations

The rise of artificial intelligence (AI) goes beyond just technological progress, transforming how people think, work, and learn. AI has the potential to free humans from the labour of basic research, allowing them to focus on core, essential questions that foster insight and understanding (Garrison, 2023; Gerlich, 2025; Szmyd & Mitera, 2024). However, AI outputs cannot be relied upon without validation because they are based on probabilities and on statistical patterns trained on past data (Beinborn & Pinter, 2023; Jurafsky & Martin, 2025; Russell & Norvig, 2022). Furthermore, hallucination is an inherent limitation of AI leading to AI outputs that are false, incorrect, or misleading (Gerlich, 2025; Szmyd & Mitera, 2024; Walter, 2024; Zhai et al., 2024). As AI outputs are plausible and convincing, humans can cede cognitive tasks and decision-making to AI systems (UNESCO, 2023, 2024a, 2024b). If AI users do not apply critical thinking when engaging with AI outputs, they might internalize hallucinations or AI-driven misinformation and, consequently, reproduce inaccurate or false information (Garrison, 2023; Hughes, 2024; University of Calgary, n.d.). Furthermore, AI outputs can amplify existing biases because AI systems are trained on content with human biases (Nicoletti, 2023; UNESCO, 2023, 2024a, 2024b; University of Calgary, n.d.; University of Kansas, n.d.). Critical thinking is key to harnessing AI’s potential (Garrison, 2023; Hughes, 2024; Park, 2025; UNESCO, 2023, 2024a, 2024b; University of Calgary, n.d.).

This paper aims to propose three pillars for an online AI literacy course and an instrument to measure learning outcomes for non-expert AI users, serving as both a theoretical framework for AI literacy course design and an educational intervention (Laupichler et al., 2023b; Messick, 1995). This will contribute to the body of knowledge and offer insights for designing an AI literacy course aligned with the assessment instrument.

AI has transformed various aspects of human life, presenting new ways to process information, solve problems, and make decisions. However, hallucinations present a significant challenge for AI users and highlight the need to be aware of them (Zhai et al., 2024). An overreliance on AI can lead to complacency, which is particularly detrimental to the development and practice of critical thinking (Gerlich, 2025; Szmyd & Mitera, 2024; Walter, 2024; Zhai et al., 2024). Incidents involving hallucinations and overreliance on AI outputs have taken place across various levels of society worldwide, including hallucinated legal factums (Charlotin, n.d.), hallucinated corporate reports (Paoli, 2025), hallucinated newspaper content (Stechyson, 2025), a hallucinated travel policy (Belanger, 2024), and academic misconduct involving AI use (Birks & Clare, 2023; Elali & Rachid, 2023). Therefore, educational intervention is needed to address the negative impacts of AI through AI literacy and critical thinking (Garrison, 2023; Gerlich, 2025; Walter, 2024).

The study is grounded in constructivism, which holds that individuals learn best when they actively construct their own meaning (Bruner, 1961; Dewey, 1910; Piaget, 1950; Saleem et al., 2021; Vygotsky, 1978). A community of inquiry (CoI) framework is rooted in constructivism and John Dewey’s progressive understanding of education (Garrison, 2013, 2017; Stenbom & Cleveland-Innes, 2024; Swan et al., 2009). This study uses a CoI framework as a theoretical foundation because it is the most established framework in digital teaching and learning research (Befus, 2016; Caskurlu et al., 2021; Garrison, 2017; Park & Shea, 2020; Stenbom, 2018; Yildirim & Seferoglu, 2021). The framework consists of three interconnected and dynamic elements: Cognitive Presence, Social Presence, and Teaching Presence. Cognitive Presence is “the extent to which learners are able to construct and confirm meaning through sustained reflection and discourse in a critical community of inquiry” (Garrison et al., 2001, p. 11). Social Presence is defined as “environmental conditions such as feelings of trust, open communication, and group cohesion” (Garrison, 2024, p. 28). Teaching Presence is defined as “the design, facilitation and direction of cognitive and social processes for the purpose of realizing personally meaningful and educationally worthwhile learning outcomes” (Anderson et al., 2001, p. 5). Deep and meaningful learning takes place through these three interconnected and overlapping presences in the community of inquiry (Garrison et al., 2000, 2001).

This study aims to provide three pillars for an online AI literacy course, along with an instrument to measure learning outcomes, thereby supporting stakeholders in designing an AI literacy course aligned with an assessment instrument. The literature review situates the study within the literature on the key aspects of the AI literacy course and the evaluation instrument. In the AI era, AI literacy is needed to debunk AI hype, avoid overreliance on and addiction to AI outputs, strengthen AI citizenship, preserve the environment sustainably, maintain human autonomy, and enhance human agency (UNESCO, 2023, 2024a). AI literacy is grounded in human rights because AI affects digital divides, equity, diversity, inclusivity, and accessibility. Therefore, AI literacy should be accessible to everyone. Critical thinking is essential to use AI effectively and ethically (Garrison, 2023; Hughes, 2024; Park, 2025; UNESCO, 2024a, 2024b).

Laupichler et al. (2022) reviewed 30 of 902 articles published since 2000 to define AI literacy for non-expert AI users in higher and adult education. A subsequent study by Laupichler et al. (2023a) developed the SNAIL (scale for the assessment of non-experts’ AI literacy), comprising 31 items on a 7-point Likert scale. The SNAIL’s efficiency, validity, and reliability support its suitability as an instrument to measure learning outcomes in the online AI literacy course and provide empirical foundations for its possible pedagogical effectiveness (FAIH, n.d.; Laupichler et al., 2023a, 2023b, 2024; Si, 2025; Topal et al., 2025).

Laupichler et al. (2022) defined AI literacy as “the ability to understand, use, monitor, and critically reflect on AI applications without necessarily being able to develop AI models themselves” (p. 1). UNESCO defined AI literacy as “the set of foundational values, ethical principles, knowledge and understanding that can ensure the proper and effective use of AI by students” (UNESCO, 2024a, p. 27). This study integrates the definitions of AI literacy from Laupichler et al. (2022) and UNESCO (2024a) because it focuses on AI literacy course design for non-expert AI users. UNESCO is a reputable international organization recognized for its extensive expertise in education, and its recommendations are supported by comprehensive research and global expert input (Chan, 2023). The definition by Laupichler et al. (2022), derived from a rigorous study, highlights the importance of critical thinking.

Lintner (2024) conducted a systematic literature review of instruments for AI literacy, covering 22 papers with 16 scales, using the PRISMA method. Lintner (2024) identified MAIRS-MS, AILS, and SNAIL as existing scales in 2024, but SNAIL appears to be the most suitable for non-expert AI users because it originally targeted higher and adult education. MAIRS-MS was explicitly designed for medical students, whereas AILS, with 12 items and four factors (awareness, use, evaluation, and ethics), poses challenges for ensuring validity and reliability. Therefore, the SNAIL is an ideal AI literacy assessment instrument for non-expert AI users (Si, 2025; Topal et al., 2025), aligning with UNESCO’s definition of AI literacy (UNESCO, 2024a). Table 1 shows the SNAIL with three factors: technical understanding, critical appraisal, and practical application (Laupichler et al., 2023a).

Table 1

Scale for the Assessment of Non-Experts’ AI Literacy (SNAIL)

| No. | Factor | Item |
|---|---|---|
| 1 | TU1 | I can describe how machine learning models are trained, validated, and tested |
| 2 | TU2 | I can explain how deep learning relates to machine learning |
| 3 | TU3 | I can explain how rule-based systems differ from machine learning systems |
| 4 | TU4 | I can explain how AI applications make decisions |
| 5 | TU5 | I can explain how reinforcement learning works on a basic level (in the context of machine learning) |
| 6 | TU6 | I can explain the difference between general (or strong) and narrow (or weak) artificial intelligence |
| 7 | TU7 | I can explain how sensors are used by computers to collect data for AI purposes |
| 8 | TU8 | I can explain what the term 'artificial neural network' means |
| 9 | TU9 | I can explain how machine learning works at a general level |
| 10 | TU10 | I can explain the difference between supervised learning and unsupervised learning |
| 11 | TU11 | I can describe the concept of explainable AI |
| 12 | TU12 | I can describe how some artificial intelligence systems can act in and react to their environment |
| 13 | TU13 | I can describe the concept of big data |
| 14 | TU14 | I can evaluate whether media representations of AI (e.g., in movies or video games) go beyond the current capabilities of AI technologies |
| 15 | CA1 | I can explain why data privacy must be considered when developing and using artificial intelligence applications |
| 16 | CA2 | I can explain why data security must be considered when developing and using artificial intelligence applications |
| 17 | CA3 | I can identify ethical issues surrounding artificial intelligence |
| 18 | CA4 | I can describe risks that may arise when using artificial intelligence systems |
| 19 | CA5 | I can name weaknesses of artificial intelligence |
| 20 | CA6 | I can describe potential legal problems that may arise when using artificial intelligence |
| 21 | CA7 | I can critically reflect on the potential impact of AI on individuals and society |
| 22 | CA8 | I can describe why humans play an important role in the development of artificial intelligence systems |
| 23 | CA9 | I can explain why data plays an important role in the development and application of artificial intelligence |
| 24 | CA10 | I can describe what artificial intelligence is |
| 25 | PA1 | I can give examples from my daily life (personal or professional) where I might be in contact with artificial intelligence |
| 26 | PA2 | I can name examples of technical applications supported by artificial intelligence |
| 27 | PA3 | I can tell if the technologies I use are supported by artificial intelligence |
| 28 | PA4 | I can assess if a problem in my field can and should be solved with artificial intelligence methods |
| 29 | PA5 | I can name applications in which AI-assisted natural language processing/understanding is used |
| 30 | PA6 | I can explain why AI has recently become increasingly important |
| 31 | PA7 | I can critically evaluate the implications of artificial intelligence applications in at least one subject area |

Note. TU refers to technical understanding, CA to critical appraisal, and PA to practical application. SNAIL by Laupichler et al. (2023a) is licensed under CC BY 4.0.
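To illustrate how responses to the 31 items in Table 1 might be summarized by factor, the following is a minimal sketch rather than an official scoring procedure. It assumes that each item is coded numerically from 1 to 7 and that a factor score is the unweighted mean of its items; both the coding and the function names (`item_codes`, `score_snail`) are hypothetical choices made for illustration.

```python
# Number of items per factor, taken from Table 1.
FACTOR_ITEMS = {"TU": 14, "CA": 10, "PA": 7}

# Assumption: the 7-point Likert scale is coded as integers 1..7.
LIKERT_MIN, LIKERT_MAX = 1, 7


def item_codes():
    """Return all 31 item codes in table order (TU1..TU14, CA1..CA10, PA1..PA7)."""
    return [f"{factor}{i}" for factor, n in FACTOR_ITEMS.items() for i in range(1, n + 1)]


def score_snail(responses):
    """Return the mean response per factor for one learner.

    `responses` maps item codes (e.g. "TU1") to Likert values.
    Using the unweighted mean as the factor score is an assumption.
    """
    for code in item_codes():
        if code not in responses:
            raise ValueError(f"missing response for item {code}")
        if not LIKERT_MIN <= responses[code] <= LIKERT_MAX:
            raise ValueError(f"response for {code} is outside {LIKERT_MIN}..{LIKERT_MAX}")

    scores = {}
    for factor, n in FACTOR_ITEMS.items():
        values = [responses[f"{factor}{i}"] for i in range(1, n + 1)]
        scores[factor] = sum(values) / len(values)
    return scores
```

A learner's three factor means can then be compared with one another, or across administrations of the scale, to see which pillar of the course may need more attention.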

Given 2500 years of critical thinking history, it is not easy to define it in a single phrase (Paul et al., 1997). Etymologically, the word critical is derived from two Greek roots: kriticos (meaning discerning judgment) and criterion (meaning standards), which implies the development of discerning judgment based on standards (p. 2). Sternberg defined critical thinking as “the mental processes, strategies, and representations people use to solve problems, make decisions, and learn new concepts” (Sternberg, 1986, p. 3). Garrison (2017) defined “critical thinking in terms of cognitive presence operationalized through the practical inquiry (PI) model” (p. 54). The collaborative and reflective process of a CoI framework supports critical thinking as an educational experience. Promoting critical thinking is a primary goal of education (Bailin et al., 1999; Dewey, 1910; DiPasquale, 2017; DiPasquale & Hunter, 2018; Garrison et al., 2000; Hughes, 2024; Lipman, 2003; Pavlis, 2025). Education should “increase the awareness of the individual about their thinking process and how to assess the validity of various ideas, whether they be socially transmitted or generated by the individual” (Garrison, 2017, p. 51). Critical thinking empowers AI users to use AI judiciously (Garrison, 2023; Park, 2025; UNESCO, 2024a, 2024b).

This study is theoretically rooted in constructivism, which holds that individuals actively construct and confirm knowledge within a community and that learning is a social activity (Garrison, 2013, 2017; Stenbom & Cleveland-Innes, 2024; Swan et al., 2009). The research design, in turn, is grounded in pragmatism, a philosophical paradigm that evaluates ideas and theories based on their practical outcomes (Cohen et al., 2018; Creswell & Creswell, 2018).

Figure 1

AI Online Literacy Course Design and Measurement

Table 2

The SNAIL with Three Factors and the AI Literacy Course Topics

| SNAIL with three factors | AI literacy course topics |
|---|---|
| Technical understanding (TU) (TU1–TU14) | Narrow and general AI; Supervised and unsupervised learning; Explainable AI and big data; Media portrayals |
| Critical appraisal (CA) (CA1–CA10) | AI hype, hallucinations, and bias; Ethical risks and societal implications; Human role; Importance of data; Legal issues |
| Practical application (PA) (PA1–PA7) | AI application evaluation; Technologies supporting AI; Importance of AI |

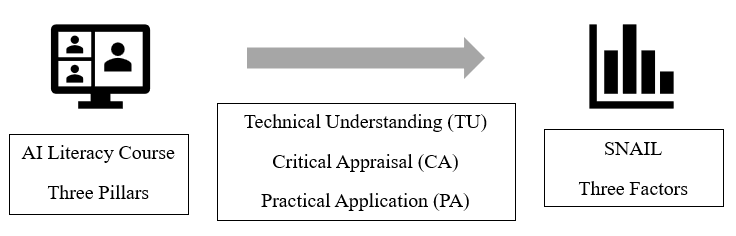

To enhance learning outcomes, this study integrates the three pillars of the AI literacy course into the SNAIL instrument, as shown in Table 2. This provides methodological and pedagogical benefits (Messick, 1995). From a methodological perspective, the SNAIL-based course structure is backed by empirical studies (Laupichler et al., 2023b, 2024; Si, 2025; Topal et al., 2025). It also reduces the mismatch between what is taught and what is evaluated. Most importantly, the assessment results justify evidence-based course improvement and identify each learner's strengths and weaknesses. From a pedagogical perspective, the alignment justifies topic selection by ensuring that each module directly targets an assessed component of non-experts’ AI literacy, thereby supporting coherent curriculum design and transparent expectations for learners (Laupichler et al., 2023b). Figure 2 illustrates the integration of the SNAIL and the AI literacy course. Integrating three AI literacy course pillars with the SNAIL allows instructional coherence and rigorous evaluation, enhancing the credibility and usability of the AI literacy course for non-expert AI users (Laupichler et al., 2022; Messick, 1995).
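The alignment between course topics and assessed factors described here can be checked mechanically. The sketch below is illustrative only: the topic lists and item counts are transcribed from Table 2 and Table 1, while the data layout and the function names (`alignment_report`, `unassessed_pillars`) are hypothetical and not part of the SNAIL or the proposed course.

```python
# Course topics per pillar, transcribed from Table 2.
COURSE_TOPICS = {
    "TU": [
        "Narrow and general AI",
        "Supervised and unsupervised learning",
        "Explainable AI and big data",
        "Media portrayals",
    ],
    "CA": [
        "AI hype, hallucinations, and bias",
        "Ethical risks and societal implications",
        "Human role",
        "Importance of data",
        "Legal issues",
    ],
    "PA": [
        "AI application evaluation",
        "Technologies supporting AI",
        "Importance of AI",
    ],
}

# Number of SNAIL items per factor, from Table 1.
SNAIL_ITEM_COUNTS = {"TU": 14, "CA": 10, "PA": 7}


def alignment_report(topics, item_counts):
    """For each assessed factor, report how many items and course topics it has."""
    report = {}
    for factor, n_items in item_counts.items():
        factor_topics = topics.get(factor, [])
        report[factor] = {
            "items": n_items,
            "topics": len(factor_topics),
            "covered": len(factor_topics) > 0,  # taught at least once
        }
    return report


def unassessed_pillars(topics, item_counts):
    """Return course pillars that have no corresponding assessed factor."""
    return sorted(set(topics) - set(item_counts))
```

With the data above, every factor is reported as covered and no pillar is left unassessed, which is the kind of match between what is taught and what is evaluated that the integration aims for.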

Figure 2

The Integration of the AI Literacy Course and the SNAIL

This study proposed an AI literacy course design with an assessment instrument as a theoretical framework and an educational intervention. This proposed framework can be empirically tested to evaluate the effectiveness of an AI literacy course or to identify instructional elements that foster AI literacy. By doing so, it will advance the design of the online AI literacy course and confirm the validity of the AI literacy assessment instrument. Since the AI literacy course with a CoI framework, along with the assessment instrument, remains empirically unexplored, the study will contribute to the body of knowledge and present evidence-informed practice to educators, instructional designers, and stakeholders. Furthermore, future research can examine the correlation between the critical appraisal factor in the SNAIL and Cognitive Presence within a CoI framework, as both are closely related to critical thinking.

AI is increasingly integrated into everyday life, reshaping how people think, live, and work. AI outputs can include hallucinations because they are generated from likelihoods and probabilities derived from the training data. AI outputs also reinforce existing biases, as AI systems are trained on content that contains biases. AI literacy and critical thinking are therefore crucial for debunking AI hype and enabling people to leverage AI’s potential effectively and ethically. This study is theoretically rooted in constructivism, which holds that individuals actively construct and confirm knowledge within a community and that learning is a social activity (Garrison, 2013, 2017; Stenbom & Cleveland-Innes, 2024; Swan et al., 2009). The research design, in turn, is grounded in pragmatism, a philosophical paradigm that evaluates ideas and theories based on their practical outcomes (Cohen et al., 2018; Creswell & Creswell, 2018). This paper proposed three pillars for an online AI literacy course and an instrument to measure learning outcomes for non-expert AI users, serving as both a theoretical framework for AI literacy course design and an educational intervention. Integrating the three pillars of the course into the SNAIL offers methodological and pedagogical benefits by enabling educators and instructional designers to ensure alignment between what is taught and what is evaluated.

Anderson, T., Rourke, L., Garrison, R., & Archer, W. (2001). Assessing teaching presence in a computer conferencing context. Journal of Asynchronous Learning Networks, 5(2), 1–17. https://doi.org/10.24059/olj.v5i2.1875

Bailin, S., Case, R., Coombs, J. R., & Daniels, L. B. (1999). Conceptualizing critical thinking. Journal of Curriculum Studies, 31(3), 285–302. https://doi.org/10.1080/002202799183133

Befus, M. K. (2016). A thematic synthesis of Community of Inquiry research 2000 to 2014 [Doctoral dissertation, Athabasca University]. https://dt.athabascau.ca/jspui/handle/10791/190

Beinborn, L., & Pinter, Y. (2023). Analyzing cognitive plausibility of subword tokenization. Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, 4478–4486. https://doi.org/10.18653/v1/2023.emnlp-main.272

Belanger, A. (2024, February 16). Air Canada must honor refund policy invented by airline’s chatbot. Ars Technica. https://arstechnica.com/tech-policy/2024/02/air-canada-must-honor-refund-policy-invented-by-airlines-chatbot/

Birks, D., & Clare, J. (2023). Linking artificial intelligence facilitated academic misconduct to existing prevention frameworks. International Journal for Educational Integrity, 19, Article 20. https://doi.org/10.1007/s40979-023-00142-3

Bruner, J. S. (1961). The act of discovery. Harvard Educational Review, 31, 21–32.

Caskurlu, S., Richardson, J. C., Maeda, Y., & Kozan, K. (2021). The qualitative evidence behind the factors impacting online learning experiences as informed by the community of inquiry framework: A thematic synthesis. Computers & Education, 165, 104111. https://doi.org/10.1016/j.compedu.2020.104111

Chan, C. K. Y. (2023). A comprehensive AI policy education framework for university teaching and learning. International Journal of Educational Technology in Higher Education, 20, Article 38. https://doi.org/10.1186/s41239-023-00408-3

Charlotin, D. (n.d.). AI hallucination cases. https://www.damiencharlotin.com/hallucinations/

Cleveland-Innes, M. F., Stenbom, S., & Garrison, D. R. (Eds.). (2024). The design of digital learning environments: Online and blended applications of the community of inquiry (1st ed.). Routledge. https://doi.org/10.4324/9781003246206

Cohen, L., Manion, L., & Morrison, K. (2018). Research methods in education (8th ed.). Routledge.

Creswell, J. W., & Creswell, J. D. (2018). Research design: Qualitative, quantitative, and mixed methods approaches (4th ed.). Sage Publications, Inc.

Dewey, J. (1910). How we think. D C Heath.

DiPasquale, J. P. (2017). Transformative learning and critical thinking in asynchronous online discussions: A systematic review [Unpublished Master’s Project, University of Ontario Institute of Technology]. https://hdl.handle.net/10155/891

DiPasquale, J., & Hunter, W. (2018). Critical thinking in asynchronous online discussions: A systematic review. Canadian Journal of Learning and Technology, 44(2). https://doi.org/10.21432/cjlt27782

Elali, F. R., & Rachid, L. N. (2023). AI-generated research paper fabrication and plagiarism in the scientific community. Patterns, 4(3), 100706. https://doi.org/10.1016/j.patter.2023.100706

Etymonline. (n.d.). Judicious—Etymology, origin & meaning. https://www.etymonline.com/word/judicious

FAIH. (n.d.). Scale for the assessment of non-experts’ AI literacy (SNAIL). https://faih.org/snail/

Garrison, D. R. (2013). Theoretical foundations and epistemological insights of the community of inquiry. In Z. Akyol & D. R. Garrison (Eds.), Educational Communities of Inquiry: Theoretical Framework, Research and Practice (pp. 1–11). IGI Global.

Garrison, D. R. (2017). E-learning in the 21st century: A community of inquiry framework for research and practice (3rd ed.). Routledge. https://doi.org/10.4324/9781315667263

Garrison, D. R. (2023, May 19). Online learning and AI [Editorial]. The Community of Inquiry. https://www.thecommunityofinquiry.org/editorial41

Garrison, D. R. (2024). A brief history of the community of inquiry framework. In M. Cleveland-Innes, S. Stenbom, & D. R. Garrison (Eds.), The design of digital learning environments: Online and blended applications of the community of inquiry (pp. 26–43). Routledge.

Garrison, D. R., Anderson, T., & Archer, W. (2000). Critical inquiry in a text-based environment: Computer conferencing in higher education. The Internet and Higher Education, 2(2–3), 87–105.

Garrison, D. R., Anderson, T., & Archer, W. (2001). Critical thinking, cognitive presence, and computer conferencing in distance education. American Journal of Distance Education, 15(1), 7–23. https://doi.org/10.1080/08923640109527071

Gerlich, M. (2025). AI tools in society: Impacts on cognitive offloading and the future of critical thinking. Societies, 15(1), 6. https://doi.org/10.3390/soc15010006

Hughes, C. (2024). Critical thinking and generative artificial intelligence. UNESCO. https://www.ibe.unesco.org/en/articles/critical-thinking-and-generative-artificial-intelligence

Jurafsky, D., & Martin, J. H. (2025, August 24). Speech and language processing (3rd ed. draft). https://web.stanford.edu/~jurafsky/slp3/

Laupichler, M. C., Aster, A., Haverkamp, N., & Raupach, T. (2023a). Development of the “Scale for the assessment of non-experts’ AI literacy” – An exploratory factor analysis. Computers in Human Behavior Reports, 12, Article 100338. https://doi.org/10.1016/j.chbr.2023.100338

Laupichler, M. C., Aster, A., Meyerheim, M., Raupach, T., & Mergen, M. (2024). Medical students’ AI literacy and attitudes towards AI: A cross-sectional two-center study using pre-validated assessment instruments. BMC Medical Education, 24, Article 401. https://doi.org/10.1186/s12909-024-05400-7

Laupichler, M. C., Aster, A., Perschewski, J.-O., & Schleiss, J. (2023b). Evaluating AI courses: A valid and reliable instrument for assessing artificial-intelligence learning through comparative self-assessment. Education Sciences, 13(10), Article 978. https://doi.org/10.3390/educsci13100978

Laupichler, M. C., Aster, A., Schirch, J., & Raupach, T. (2022). Artificial intelligence literacy in higher and adult education: A scoping literature review. Computers and Education: Artificial Intelligence, 3, Article 100101. https://doi.org/10.1016/j.caeai.2022.100101

Lintner, T. (2024). A systematic review of AI literacy scales. npj Science of Learning, 9, Article 50. https://doi.org/10.1038/s41539-024-00264-4

Lipman, M. (2003). Thinking in education (2nd ed.). Cambridge University Press.

Messick, S. (1995). Validity of psychological assessment: Validation of inferences from persons’ responses and performances as scientific inquiry into score meaning. American Psychologist, 50(9), 741–749. https://doi.org/10.1037/0003-066X.50.9.741

Nicoletti, L. (2023, June 9). Humans are biased. Generative AI is even worse. Bloomberg. https://www.bloomberg.com/graphics/2023-generative-ai-bias/

Paoli, N. (2025, October 7). Deloitte was caught using AI in $290,000 report to help the Australian government crack down on welfare after a researcher flagged hallucinations. Fortune. https://fortune.com/2025/10/07/deloitte-ai-australia-government-report-hallucinations-technology-290000-refund/

Park, H. (2025). Integrating natural language processing applications into instructional design: Benefits and challenges. Journal of Integrated Studies, 16(2). https://jis.athabascau.ca/index.php/jis/article/view/451

Park, H., & Shea, P. (2020). Ten-year review of online learning research through co-citation analysis. Online Learning, 24(2). https://doi.org/10.24059/olj.v24i2.2001

Paul, R. W., Elder, L., & Bartell, T. (1997). California teacher preparation for instruction in critical thinking: Research findings and policy recommendations. https://eric.ed.gov/?id=ED437379

Pavlis, P. (2025). Teaching critical thinking in the age of artificial intelligence [Doctoral dissertation]. Gwynedd Mercy University.

Piaget, J. (1950). The psychology of intelligence. Routledge. https://doi.org/10.4324/9780203164730

Russell, S., & Norvig, P. (2022). Artificial intelligence: A modern approach (4th ed.). Pearson Education Limited.

Saleem, A., Kausar, H., & Deeba, F. (2021). Social constructivism: A new paradigm in teaching and learning environment. Perennial Journal of History, 2(2), 403–421. https://doi.org/10.52700/pjh.v2i2.86

Si, J. (2025). Exploring AI literacy, attitudes toward AI, and intentions to use AI in clinical contexts among healthcare students in Korea: A cross-sectional study. BMC Medical Education, 25, Article 1233. https://doi.org/10.1186/s12909-025-07766-8

Stechyson, N. (2025, May 20). Chicago newspaper prints a summer reading list. The problem? The books don't exist. CBC News. https://www.cbc.ca/news/world/chicago-sun-times-ai-book-list-1.7539016

Stenbom, S. (2018). A systematic review of the Community of Inquiry survey. The Internet and Higher Education, 39, 22–32. https://doi.org/10.1016/j.iheduc.2018.06.001

Stenbom, S., & Cleveland-Innes, M. (2024). Introduction to the community of inquiry theoretical framework. In M. Cleveland-Innes, S. Stenbom, & D. R. Garrison (Eds.), The design of digital learning environments: Online and blended applications of the community of inquiry (pp. 3–25). Routledge. https://doi.org/10.4324/9781003246206

Sternberg, R. J. (1986). Critical thinking: Its nature, measurement, and improvement. National Institute of Education. https://eric.ed.gov/?id=ED272882

Swan, K., Garrison, D. R., & Richardson, J. C. (2009). A constructivist approach to online learning: The community of inquiry framework. In C. R. Payne (Ed.), Information technology and constructivism in higher education: Progressive learning frameworks (pp. 43–57). IGI Global.

Szmyd, K., & Mitera, E. (2024). The impact of artificial intelligence on the development of critical thinking skills in students. European Research Studies Journal, 26(2), 1022–1039. https://doi.org/10.35808/ersj/3876

Topal, A. D., Gökçe, A. T., Eren, C. D., & Geçer, A. K. (2025). Artificial intelligence literacy scale: A study of reliability and validity for a sample of Turkish university students. Journal of Learning and Teaching in Digital Age, 10(1), 58–67. https://eric.ed.gov/?id=EJ1459888

UNESCO. (2023). Guidance for generative AI in education and research. https://doi.org/10.54675/EWZM9535

UNESCO. (2024a). AI competency framework for students. https://doi.org/10.54675/JKJB9835

UNESCO. (2024b). AI competency framework for teachers. https://doi.org/10.54675/ZJTE2084

University of Calgary. (n.d.). AI literacy and critical thinking [PDF]. https://ucalgary.ca/live-uc-ucalgary-site/sites/default/files/teams/23/AI%20Handouts/AI%20Literacy/AI-literacy-and-critical-thinking_0.pdf

University of Kansas. (n.d.). Helping students understand the biases in generative AI. https://cte.ku.edu/addressing-bias-ai

Vygotsky, L. S. (1978). Mind in society: The development of higher psychological processes. Harvard University Press.

Walter, Y. (2024). Embracing the future of artificial intelligence in the classroom: The relevance of AI literacy, prompt engineering, and critical thinking in modern education. International Journal of Educational Technology in Higher Education, 21, Article 15. https://doi.org/10.1186/s41239-024-00448-3

Yildirim, D., & Seferoglu, S. S. (2021). Evaluation of the effectiveness of online courses based on the community of inquiry model. Turkish Online Journal of Distance Education, 22(2), 147–163. https://doi.org/10.17718/tojde.906834

Zhai, C., Wibowo, S., & Li, L. D. (2024). The effects of over-reliance on AI dialogue systems on students’ cognitive abilities: A systematic review. Smart Learning Environments, 11, Article 28. https://doi.org/10.1186/s40561-024-00316-7